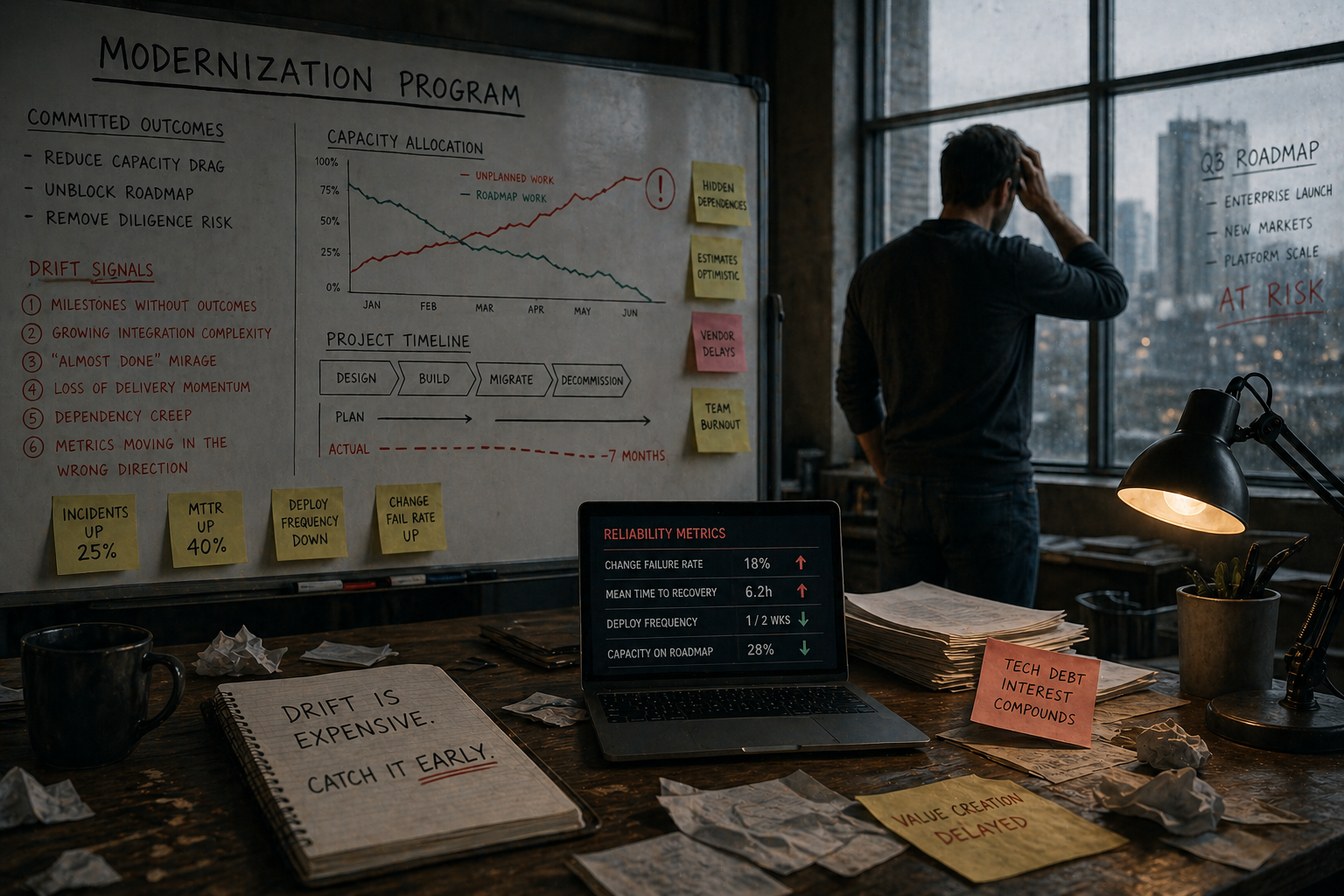

The third article in a four-part series on platform modernization. Article 1 made the case. Article 2 covered the execution discipline. This one covers what happens when execution starts to slip, and how to spot it before the board does.

Most modernization failures aren’t dramatic. They don’t blow up in a release. They don’t get killed in a board meeting.

They drift.

The pitch was clean. The plan was reasonable. The team did good work in the early sprints. Six months later something is off but nobody can quite name it. Milestones are slipping. The metrics aren’t moving the way the plan said they would. The team is heads-down, but something feels off. Nine months in, someone in finance asks the question that breaks it open. What have we actually delivered?

That’s drift. It’s the failure mode for most modernization efforts.

The warning signs are visible six months before leadership acknowledges the problem. The CTOs who survive these efforts are the ones who learn to detect drift early and correct course before sunk costs make the conversation impossible.

Drift Is the Failure Mode

Article 1 made the case for modernization as reliability tax recovery. Article 2 covered the discipline of self-funding increments. This is what happens when that discipline slips without anyone noticing.

The mechanics are almost always the same. Early increments deliver. The team gets confidence. Then the work shifts to the messy middle. Edge cases surface. Hidden dependencies appear. Estimates get optimistic to keep stakeholders calm. Each increment takes a little longer than the last. Nobody flags it because no single slip looks bad.

Six months in, the project is three months behind. Twelve months in, it’s seven months behind and the team has stopped giving honest estimates because honest estimates trigger uncomfortable conversations.

By the time leadership notices, the options are limited. Capacity has been spent. The team has lost confidence. Roadmap items that were supposed to be unblocked are still blocked. The reliability tax hasn’t been recovered.

Drift is recoverable early. Late, it’s a write-off.

Six Drift Signals

These appear before leadership notices the problem. Read them quickly.

1. Milestones without outcomes

The most common drift signal is the milestone that delivers no measurable change.

“We decomposed the monolith into three services” is a milestone. “Engineering capacity allocation moved from 60/40 to 70/30” is an outcome. The first is an architectural achievement. The second is a business result.

When progress gets reported in code moved, services created, or dependencies refactored, with no movement in the business metrics from Article 1, the effort is drifting. The team may be working hard. The work may be technically sound. But it’s not recovering capacity. It’s not unblocking roadmap. It’s not reducing diligence risk.

The fix: every milestone needs an associated business metric. If the metric doesn’t move, the milestone didn’t deliver value.

2. Growing integration complexity

The team extracts a service from the monolith. Now the monolith calls the service. The service needs data from the monolith. A synchronous API gets built. Then another. Then compensation logic for when one side is down. Then retry logic. Then distributed transaction handling.

Within six months, the system is more complex than it was before modernization started. Incidents go up instead of down. The team that was supposed to move faster is now debugging network timeouts and data inconsistency issues that didn’t exist in the monolith.

This is drift caused by extracting before encapsulating. The boundary wasn’t proven. Now you have all the operational complexity of a distributed system and none of the team independence benefits.

The fix: stop extracting. Go back to encapsulation. If the boundary can’t be proven clean within the existing system, it won’t be clean when distributed.

3. The “almost done” mirage

The last 20% takes 80% of the time. This isn’t an exaggeration. It’s a pattern.

Early migrations are easy. Clear boundaries. Well-understood workflows. Minimal edge cases. The team ships the first few increments quickly and the burndown looks great.

Then the work hits the messy middle. Shared state that multiple subsystems depend on. Undocumented business rules embedded in stored procedures. Edge cases that only trigger under specific customer configurations. Each remaining increment takes longer than the last.

The team keeps reporting “three sprints away” while the actual completion date slides. If they’ve been saying “three sprints away” for the last four months, the plan is wrong. That’s not progress. That’s drift wearing progress as a costume.

The fix: re-estimate from the ground up. Don’t start with what’s been completed. Start with what remains, broken down to individual tasks, with honest effort estimates. Add 50% buffer for the unknowns. If the new timeline is unacceptable to the business, scope shrinks or the effort pauses. There’s no third option.

4. Loss of delivery momentum

Modernization shouldn’t stop feature delivery. It might slow it down. But if the organization goes consecutive quarters without shipping anything visible to customers, engineering loses its connection to the business.

The board notices this faster than anything else on this list. Quarterly reviews scrutinize roadmap delivery against commitments. When that line goes flat for two quarters, the financial sponsor starts asking what engineering is actually doing.

Inside the company, the cycle gets worse. Product teams get frustrated. Sales gets frustrated. Customer success gets frustrated. Engineering feels defensive and isolated. Morale drops. The people who understand the legacy system best start leaving, taking institutional knowledge with them. The drift accelerates.

The fix: hard fork capacity. 60% to modernization, 40% to feature delivery, enforced at sprint planning. If that ratio makes the modernization timeline unacceptable, the business needs to make an explicit choice. Accept the longer timeline. Accept the operational risk of the legacy system. Or pause modernization entirely. All three are valid. Drifting along while pretending the math works is not.

5. Vendor lock-in during transition

Some modernizations replace one vendor with another. An aging SaaS swapped for a newer one. Self-hosted infrastructure moved to a managed service.

The drift pattern: 60% of workflows migrate to the new vendor in the first year. The remaining 40% is complex and keeps getting deprioritized. The company is now paying for two platforms, neither of which can be turned off. Costs go up, not down. Operational burden increases because incidents can happen in either system.

Worse, the new vendor starts asserting more leverage. Contract renegotiations. Price increases. Feature deprecations that require rework. The company has less leverage because the migration is incomplete and painful to reverse. The diligence story gets worse, not better.

If old and new platforms have been running in parallel for more than two quarters with no clear decommission plan, you’re drifting toward permanent dual-platform cost.

The fix: set a hard cutover date and work backward. If complete migration isn’t feasible by that date, scope shrinks. Identify the workflows that will remain on the old platform and renegotiate the contract for that reduced footprint. Don’t let parallel state become permanent.

6. Operational metrics moving the wrong way

The modernization effort is supposed to recover the reliability tax. If change failure rate is going up. If MTTR is getting longer. If incident hours are trending up instead of down. The effort is making things worse.

This usually happens when teams ship modernized components without adequate observability, testing, or rollback capability. The new code is less stable than the old code it replaced. Customers notice. Support escalates. The board sees customer-impacting incidents trending up at the same time roadmap delivery is trending down. That’s the worst of both worlds.

If the four core engineering metrics from Article 1 are regressing for two consecutive quarters, the modernization isn’t drifting anymore. It’s actively destroying value.

The fix: stop shipping new increments until the operational discipline catches up. Invest in characterization tests, observability instrumentation, and release tooling before continuing. A slower, more stable modernization is better than a fast one that destroys trust.

What Detection Looks Like in Practice

A modernization I ran at a previous company hit its first release in 13 weeks. It worked. The reasons it worked are the inverse of the warning signs above.

The first increment was a feature-driven microservice that delivered a customer-facing capability while doubling as the proving ground for the broader architecture. Encapsulate before extract. Self-funding from week one. The financial model was pressure-tested by Sales, Partnerships, IT, and Finance before we wrote a line of code. Every milestone had a business outcome attached.

What kept it from drifting wasn’t luck. It was being uncomfortably honest at the weekly reviews. When estimates started to look optimistic, we re-estimated from the ground up. When integration complexity started growing, we paused and asked whether the boundary was proven. When operational metrics moved the wrong way, we stopped shipping until they corrected.

None of that felt good in the moment. Every honest re-estimate was a difficult conversation. Every pause to fix observability looked like slowdown. But it kept the project from drifting into the messy middle that kills most modernization efforts.

The discipline isn’t avoiding drift. Drift is normal. The discipline is detecting it within weeks instead of quarters.

The Hardest Sentence in a Board Meeting

The hardest decision in a failing modernization isn’t technical. It’s saying out loud: “We need to pause this.”

Engineering teams are wired to finish what they started. Six months of work feels like too much to abandon. Leadership has already sold the board on the initiative. Admitting drift is uncomfortable. Admitting it might be unrecoverable is worse.

But continuing a drifting modernization is almost always more expensive than stopping it. The capacity drag compounds. The roadmap stays blocked. The diligence story gets worse every quarter the work continues without delivering.

When you spot drift, there are three options. The criteria for each are different.

Continue if:

- Engineering capacity allocation is still moving toward roadmap (even slowly)

- Milestones are delivering business outcomes, not just architectural changes

- The team has maintained delivery momentum on customer-facing features

- The financial sponsor and board still have confidence in the plan

Pivot if:

- Scope can be meaningfully reduced while still delivering value

- Sequencing can front-load the highest-ROI increments

- Additional capacity (contractors, build partner, offshore team) can compress the timeline

- Honest re-estimation produces a credible timeline the board will accept

Stop if:

- The revised timeline extends past the exit window

- Remaining work would consume more than 50% of engineering capacity for the next 12 months

- Operational metrics are regressing and the team can’t identify a credible fix

- Key technical leaders who understood the original plan have left and knowledge transfer failed

The trap is continuing when none of the “continue” criteria are met, purely because of sunk cost.

Why Modernization Efforts Actually Fail

Read enough modernization post-mortems and the root cause is almost always one of three things.

Underestimated complexity. The team didn’t fully understand the legacy system before starting to replace it. Undocumented dependencies surfaced late. Business rules buried in obscure code paths broke when extracted. The plan assumed a system that didn’t actually exist.

Inadequate testing discipline. The safety net wasn’t in place before changes started shipping. Regressions surfaced in production rather than CI. Customer trust eroded faster than the team could recover it. The reliability tax went up instead of down.

Organizational impatience. The business expected results faster than the technical work allowed. Pressure to show progress led to shortcuts. Shortcuts led to quality issues. Quality issues led to loss of confidence. Loss of confidence led to political fights about scope. Political fights led to drift.

All three are avoidable. But avoiding them requires being uncomfortably honest with the board, with the team, and with yourself. About what’s actually working. About what’s not. About what the timeline really looks like.

The Honest Mirror

The CTOs who succeed at modernization aren’t the ones who never encounter drift. Drift is normal. Every long modernization arc has it.

They’re the ones who notice it in weeks instead of quarters. They measure outcomes, not activity. They protect operational discipline even when timelines slip. They communicate honestly with the financial sponsor about risk and progress. They’re willing to say “we need to pause this” before the board says it for them.

The teams that fail keep reporting green status while the reality deteriorates. They hide drift because surfacing it is uncomfortable. They optimize for looking busy rather than delivering capacity recovery.

The cost of a failed modernization is steep. A half-working platform. A demoralized team. Lost credibility with the board. A diligence story that compresses valuation at exit.

The cost of detecting drift early and correcting course is much lower.

The next article in this series covers how AI is changing the economics of modernization. What it actually accelerates. What it doesn’t. The new failure modes it introduces. It doesn’t change the strategic principles. But it changes the labor cost enough that the talent calculus has to shift.