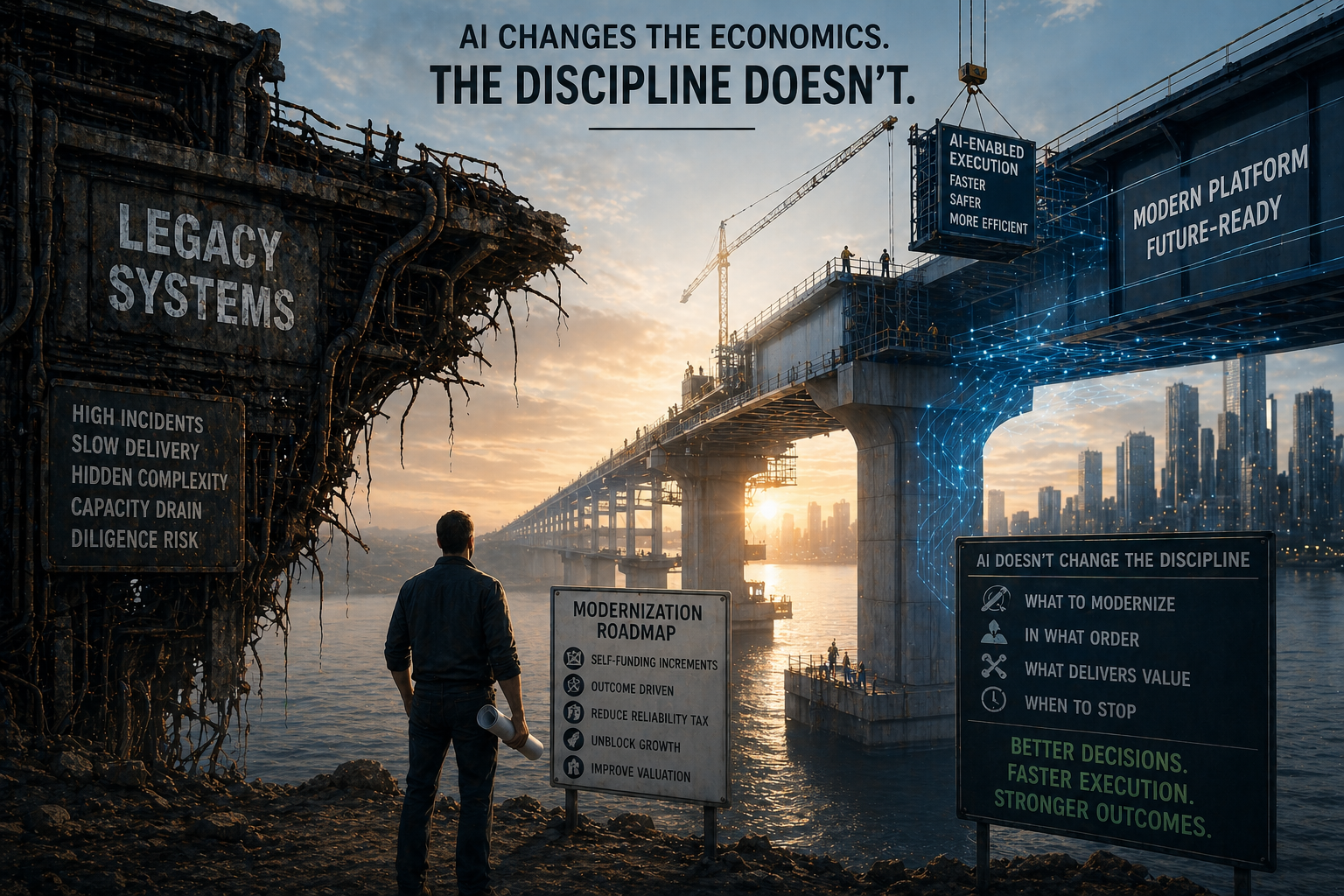

The fourth and final article in a four-part series on platform modernization. Article 1 made the case for modernization as reliability tax recovery. Article 2 covered the discipline of self-funding increments. Article 3 covered drift, the failure mode that kills most modernization efforts. This one covers what AI changes about all of it. And what it doesn’t.

The vendor pitch is everywhere. Code migration in weeks instead of months. Legacy systems understood faster than ever. Effort reductions of 60% or 70% on the mechanical work of modernization.

Most of it is true. AI is genuinely changing the cost structure of how modernization gets done.

None of it solves the hard part.

The strategic decisions that determine whether a modernization effort succeeds or fails sit untouched. What to modernize. In what order. What delivers business value. When to stop.

AI doesn’t help with any of that. It just helps you ship faster, including shipping the wrong thing faster.

The companies that struggled before will now struggle faster. The companies that were disciplined before will benefit. AI is a multiplier, not a corrective.

What AI Actually Changes

The leverage is real. It just shows up in specific places.

Codebase understanding. AI can trace dependencies, summarize legacy logic, and surface patterns across millions of lines of code in hours instead of weeks. “This database table is referenced by 47 files across 12 modules. Here are the top five riskiest dependencies.” That kind of analysis used to require weeks of manual archaeology. Now it’s a same-day deliverable.
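To make that kind of finding concrete, here is a minimal sketch of the underlying count, not a description of how any AI tool works internally. It assumes the source files are already loaded into a dictionary and that the top-level directory stands in for the module; the `table_references` helper, the file paths, and the `customer_accounts` table are all hypothetical.

```python
import re
from collections import defaultdict
from pathlib import PurePosixPath


def table_references(sources: dict[str, str], table: str) -> dict[str, list[str]]:
    """Group the files that mention `table` by top-level directory (treated as the module)."""
    pattern = re.compile(rf"\b{re.escape(table)}\b", re.IGNORECASE)
    by_module: dict[str, list[str]] = defaultdict(list)
    for path, text in sorted(sources.items()):
        if pattern.search(text):
            module = PurePosixPath(path).parts[0]
            by_module[module].append(path)
    return dict(by_module)


# Hypothetical file contents, standing in for a real repository scan.
sources = {
    "billing/invoice.py": "SELECT * FROM customer_accounts WHERE id = ?",
    "billing/refund.py": "UPDATE refunds SET status = 'done'",
    "reports/monthly.sql": "JOIN customer_accounts ca ON ca.id = o.account_id",
}

refs = table_references(sources, "customer_accounts")
file_count = sum(len(files) for files in refs.values())
print(f"customer_accounts is referenced by {file_count} files across {len(refs)} modules")
```

A plain text match like this finds references but says nothing about which ones are risky. That ranking is the part that used to take weeks of reading, and it still needs a human to confirm.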

Refactoring and migration. Mechanical code translation is the highest-leverage AI application in modernization today. A portfolio company recently migrated 40,000 lines of legacy Java to TypeScript in 8 weeks. The previous attempt using manual rewrite took 6 months and was abandoned. AI handled the mechanical translation. Humans focused on architectural decisions and edge case validation.

Test generation. Article 2 made the case that incremental modernization without testing is just incremental risk. AI excels at generating characterization tests for legacy code. Feed it a function, ask for tests covering all code paths, review the output, run against the existing system. The behavior is now documented and regression-tested. The safety net that used to be the bottleneck now ships in days.
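A characterization test pins down what the code does today, not what anyone thinks it should do. The sketch below shows the shape of the technique under simple assumptions: `legacy_shipping_fee` is a made-up stand-in for undocumented legacy logic, and the two rewrites are hypothetical candidates checked against its recorded behavior.

```python
def legacy_shipping_fee(weight_kg: float, express: bool) -> float:
    """Hypothetical stand-in for legacy code whose rules were never written down."""
    fee = 5.0 if weight_kg <= 1 else 5.0 + (weight_kg - 1) * 2.5
    if express:
        fee = fee * 1.5 + 1  # odd flat surcharge: the kind of quirk worth pinning down
    return round(fee, 2)


def record_behavior(func, cases):
    """Run the existing implementation and capture what it actually returns."""
    return {case: func(*case) for case in cases}


def check_against_baseline(func, baseline):
    """Return {case: (expected, actual)} for every case where `func` diverges."""
    mismatches = {}
    for case, expected in baseline.items():
        actual = func(*case)
        if actual != expected:
            mismatches[case] = (expected, actual)
    return mismatches


cases = [(0.5, False), (1.0, False), (3.0, False), (3.0, True)]
baseline = record_behavior(legacy_shipping_fee, cases)


def refactored_shipping_fee(weight_kg: float, express: bool) -> float:
    """A rewrite that preserves the quirk."""
    base = 5.0 + max(weight_kg - 1, 0) * 2.5
    return round(base * 1.5 + 1 if express else base, 2)


def careless_rewrite(weight_kg: float, express: bool) -> float:
    """A rewrite that 'cleans up' the flat surcharge and silently changes behavior."""
    base = 5.0 + max(weight_kg - 1, 0) * 2.5
    return round(base * 1.5 if express else base, 2)


faithful_mismatches = check_against_baseline(refactored_shipping_fee, baseline)
careless_mismatches = check_against_baseline(careless_rewrite, baseline)
```

The baseline is only as good as the cases that feed it. Choosing inputs that exercise the quirks is the review work that stays with the team.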

Documentation. Legacy systems accumulate undocumented complexity. Business rules buried in stored procedures. Integration patterns that exist only in tribal knowledge. AI can extract this into usable artifacts, often surfacing dependencies the original team had forgotten.

These four areas represent the real economics shift. Mechanical work that used to consume engineering capacity for months now takes weeks. The reliability tax on these specific tasks goes down.

That’s the upside. It’s significant. It also doesn’t address the failure modes from the prior three articles in this series.

What AI Doesn’t Change

The framework from Articles 1-3 is unchanged.

The reliability tax is still real. AI doesn’t decide what to fix first. It doesn’t tell you whether the integration layer or the data model is the bigger drag on engineering capacity. It doesn’t translate engineering metrics into the business outcomes the board cares about. The CTOs who didn’t understand the reliability tax before AI still don’t understand it after.

Self-funding increments still apply. AI can ship a refactored microservice in a week. That doesn’t mean the microservice paid for itself. The test from Article 2 is unchanged: does this increment recover capacity, unblock roadmap, or remove diligence risk? AI accelerates execution. It doesn’t ensure each step delivers value.

Drift is still the failure mode. And this is where AI is most dangerous. AI-accelerated modernization can disguise drift longer than traditional modernization. The team is shipping. The burndown looks great. PRs are flying through review. But the underlying business outcomes aren’t moving. Velocity without outcomes is the new drift signal, and AI makes it easier than ever to generate impressive velocity without recovering any of the reliability tax.
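One way to make the velocity-without-outcomes signal checkable is to compare a throughput measure against an outcome measure over the same window. The sketch below is illustrative only. Merged PRs as the velocity proxy, firefighting hours as the reliability tax proxy, and both thresholds are assumptions to replace with whatever your board already tracks.

```python
from dataclasses import dataclass


@dataclass
class SprintSnapshot:
    merged_prs: int            # velocity proxy (assumed)
    firefighting_hours: float  # reliability tax proxy (assumed): hours lost to incidents and rework


def velocity_without_outcomes(
    history: list[SprintSnapshot],
    min_velocity_gain: float = 0.25,  # illustrative threshold
    min_tax_reduction: float = 0.05,  # illustrative threshold
) -> bool:
    """True when throughput rose over the window but the reliability tax proxy barely moved."""
    if len(history) < 2:
        raise ValueError("need at least two sprints to compare")
    first, last = history[0], history[-1]
    if first.merged_prs <= 0 or first.firefighting_hours <= 0:
        raise ValueError("baseline sprint must have non-zero values")
    velocity_gain = (last.merged_prs - first.merged_prs) / first.merged_prs
    tax_reduction = (first.firefighting_hours - last.firefighting_hours) / first.firefighting_hours
    return velocity_gain >= min_velocity_gain and tax_reduction < min_tax_reduction


# Shipping much more, recovering nothing: the drift pattern.
drifting_history = [SprintSnapshot(40, 120.0), SprintSnapshot(55, 118.0), SprintSnapshot(70, 121.0)]
# Shipping more and recovering capacity.
healthy_history = [SprintSnapshot(40, 120.0), SprintSnapshot(60, 90.0)]

drifting = velocity_without_outcomes(drifting_history)
healthy = velocity_without_outcomes(healthy_history)
```

The check is deliberately crude. Its value is that it forces the outcome number to sit beside the velocity number in every review.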

AI will happily help you refactor the wrong thing, faster. With better test coverage. And cleaner documentation. The modernization will look healthy right up until the board asks what got delivered.

New Failure Modes

Beyond accelerating the existing failure modes, AI introduces four new ones.

Over-modernization. Teams refactor everything because they can. AI makes refactoring cheap, so the temptation is to refactor anything that looks ugly. But more code changed isn’t more value delivered. The capacity that goes into AI-assisted refactoring of a stable subsystem is capacity that doesn’t go into recovering the reliability tax. The cost dropped, but it’s still a cost.

False confidence. AI-generated code looks right. It compiles. It passes basic tests. It integrates cleanly with existing systems. But it can miss edge cases that aren’t in the training data, break implicit behavior the original code depended on, or introduce subtle bugs that only surface in production under specific conditions. The review burden hasn’t gone down. It’s shifted from writing to validating, and validating is harder.

Drift acceleration. The detection window from Article 3 has compressed. More changes per sprint. More surface area. Faster accumulation of mistakes. Drift signals that used to surface over two quarters can now surface in two months, but only if leadership is watching. The CTOs who detected drift in weeks before will detect it in days now. The CTOs who detected it in quarters before will be quarters behind, but with significantly more code shipped.

Tool-driven strategy. This is the worst of them. Teams modernize based on what their AI tools handle well, not what the business needs. The roadmap becomes constrained by the tool rather than the value creation plan. “We modernized the parts AI was good at” is not the same as “we modernized the parts that mattered.” Operating partners reviewing modernization progress should be asking which one is true.

Modernizing With AI vs. Modernizing For AI

This is the distinction most teams miss.

Modernizing with AI means using AI to refactor and migrate existing systems. Code translation. Test generation. Dependency analysis. The work described under What AI Actually Changes above. This is what most teams are doing right now, and it’s where the vendor pitches are focused.

Modernizing for AI means restructuring the platform to support what AI workloads will actually require. Clean data access. Real-time observability. Modular service boundaries that AI agents can compose. APIs designed for programmatic consumption rather than human workflows. This is the harder work, and it’s where the durable competitive advantage lives.

Most teams are using AI to modernize the past. The bigger opportunity is modernizing the platform for what AI will require next.

A portfolio company that uses AI to refactor a 12-year-old monolith into cleaner services has saved engineering capacity. Real value. But the company that restructures its data layer, service boundaries, and observability infrastructure to enable AI-native product features has built something durable. The diligence story for the first is “we paid down some technical debt.” The diligence story for the second is “we built the platform the next decade of AI products will run on.”

Both have a place. But conflating them is expensive.

The Discipline Doesn’t Change

The framework from this series is unchanged.

The reliability tax still has to be quantified before the board funds modernization. Self-funding increments still have to pay for themselves. Drift still kills modernization efforts that don’t catch it early. AI doesn’t replace any of those decisions. It just changes how fast they get executed.

One new rule for the AI era: use AI to accelerate the increments, not redefine them. The unit of progress is still the self-funding increment that pays for itself in capacity, roadmap, or diligence terms. AI changes how fast that increment ships. It doesn’t change what makes it valuable.

The companies that win this next decade won’t be the ones that adopted AI fastest. They’ll be the ones that applied it with the discipline modernization has always required. Knowing what to modernize. In what order. What delivers business value. When to stop.

The vendor pitches will continue. The hype will fade. The CTOs who treat AI as a multiplier on existing discipline will recover the reliability tax faster, ship self-funding increments more efficiently, and detect drift earlier than their peers. The CTOs who treat AI as a substitute for discipline will modernize the wrong things, faster, with better test coverage, and call it progress.

Reliability tax. Self-funding increments. Drift. AI economics. Together they’re the framework I’d use to evaluate any modernization effort, whether I was running it, advising on it, or sitting on the board reviewing it.

Modernization is hard. It always has been. AI doesn’t change that. It just raises the cost of getting it wrong, and the upside of getting it right.