Earlier this year I published a four-part series on platform modernization. Reliability tax. Self-funding increments. Drift. AI economics. Together they’re the framework I’d use to evaluate any modernization effort. This piece is about the seam where modernization meets AI governance, and why most companies are getting that seam wrong.

AI governance is widely treated as a compliance function. A separate workstream. Privacy reviews. Model cards. Acceptable use policies. An AI committee that meets quarterly. Documentation for the next audit cycle.

For a PE-backed company running platform modernization with AI tooling, that framing fails.

The governance decisions that matter most aren’t getting made in the AI committee meeting. They’re getting made in sprint planning, in code review, in the architecture decisions that shape what AI tools can and can’t see, and in the modernization sequencing that determines which legacy systems get rebuilt with AI assistance and which get left alone.

Governance has to be embedded in modernization decision-making, not bolted on after.

This is the seam most companies are getting wrong.

Why Bolted-On Governance Fails

The bolted-on model assumes AI governance is a review function. Engineering builds. Governance reviews. Approve, reject, revise. The cadence is monthly or quarterly. The output is documentation.

This works for low-stakes AI features. A chatbot. A summarization tool. A recommendation engine. The governance question is about output quality and acceptable use, and a quarterly review can handle it.

It breaks down completely when AI is embedded in the engineering process itself.

When AI is generating code, refactoring legacy systems, writing tests, and analyzing dependencies, the governance questions aren’t about output quality. They’re about the integrity of the work product. What did the AI actually do? What did it miss? What did the team validate, and what did they assume? Where is the audit trail? When the platform fails six months from now, will anyone be able to reconstruct why a particular decision was made?

Those questions can’t be answered by a quarterly review. They have to be answered in the moment, by the people doing the work, using practices that are part of how modernization gets executed.

That’s what embedded governance means. But “embedded governance” is a soft phrase that hides what’s actually being built.

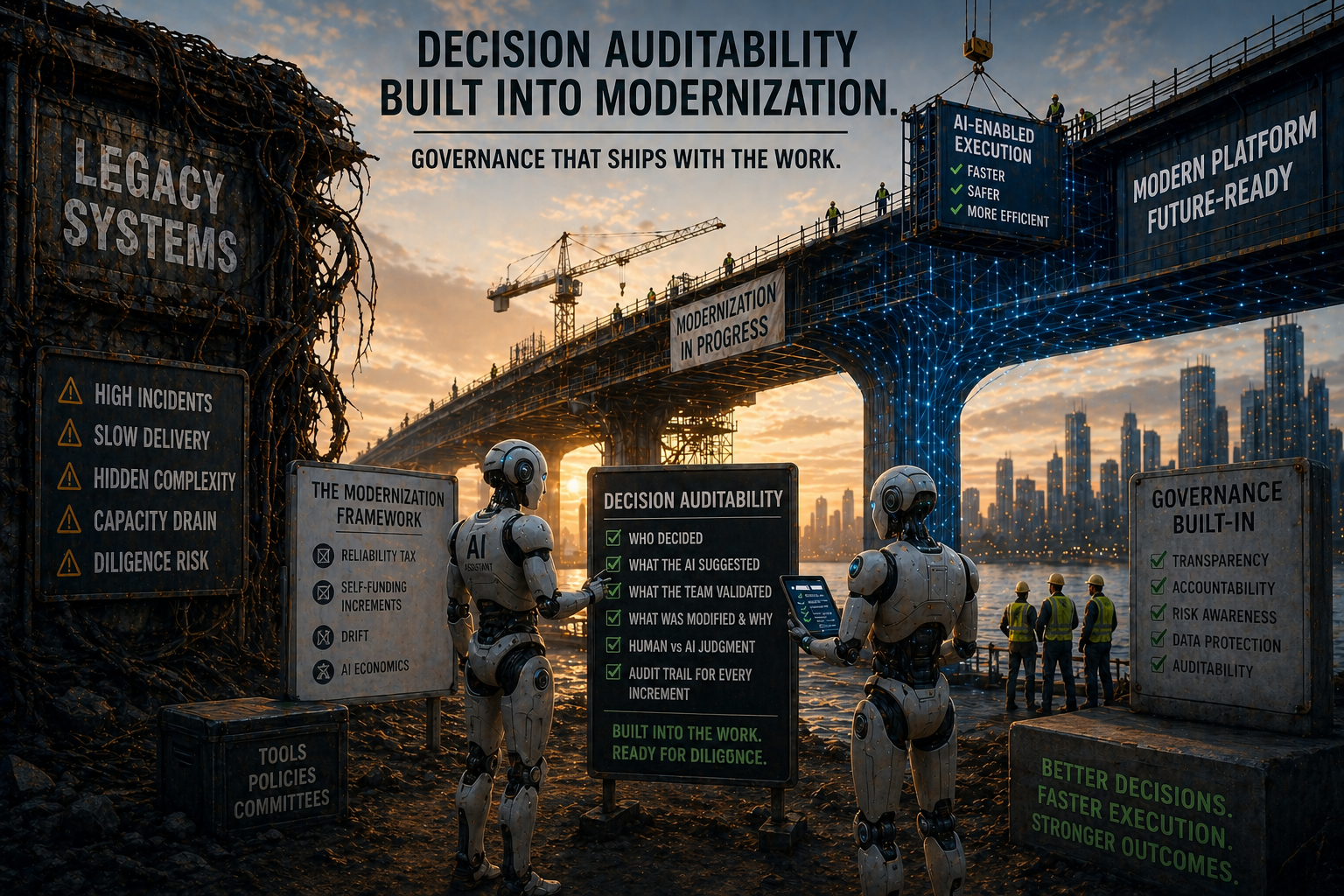

The Concept That Matters: Decision Auditability

Embedded governance is not more documentation. It is decision auditability built into the modernization process.

Decision auditability means that when something fails, when a buyer asks a hard question, when a regulator requests evidence, the team can reconstruct what happened. Who decided. What the AI suggested. What the team validated. What was modified, and why. Where the boundary sat between AI judgment and human judgment.

Bolted-on governance produces artifacts that exist next to the work. Decision auditability is a property of the work itself. The audit trail is not assembled retroactively for a compliance review. It exists because the team built it in as they went.

This distinction matters for three reasons.

It changes what gets tracked. Bolted-on governance tracks policies and approvals. Decision auditability tracks decisions. The shift sounds subtle. In practice it changes which artifacts the team produces, which conversations happen at code review, and which fields appear in the engineering tracking system.

It changes who is accountable. Bolted-on governance puts accountability on a committee that meets quarterly. Decision auditability puts accountability on the engineers and leads making decisions in the moment. That accountability has to be supported by tooling, training, and authority, but it sits with the people doing the work.

It changes the diligence story. A buyer’s technical due diligence team asking “how was AI used in the codebase” gets a different answer from a company with bolted-on governance than from one with decision auditability. The first answer is “we have policies and a committee.” The second answer is “here’s the audit trail for every increment that shipped with AI involvement.” The first answer triggers deeper diligence. The second answer closes the question.

Decision auditability is the working concept the rest of this article keeps coming back to.

The Four Series Anchors, Through a Governance Lens

The four-part modernization series laid out a framework. Each part has a governance dimension, and decision auditability is what connects them.

The reliability tax has a governance dimension. Article 1 made the case that modernization gets funded by quantifying engineering capacity drag. AI accelerates the recovery work, but it also introduces a new tax: the cost of validating AI-generated code, AI-generated tests, and AI-generated architecture suggestions. Companies that count the recovery without counting the validation overhead are mispricing the work. Decision auditability means tracking validation effort as a first-class engineering metric, not as an afterthought in a compliance report.
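One way to make validation overhead visible is to record it next to the capacity an increment claims to recover. The sketch below is illustrative only: the field names, the hour figures, and the idea of a per-increment "validation ratio" are assumptions, not something the series prescribes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IncrementEffort:
    """Effort figures for one modernization increment (hypothetical fields)."""
    name: str
    capacity_recovered_hours: float  # engineering hours the increment frees up
    validation_hours: float          # hours spent validating AI-generated output

    @property
    def net_recovered_hours(self) -> float:
        # Recovery counted after the validation overhead is subtracted.
        return self.capacity_recovered_hours - self.validation_hours

    @property
    def validation_ratio(self) -> float:
        # Share of the claimed recovery consumed by validation work.
        if self.capacity_recovered_hours == 0:
            return float("inf") if self.validation_hours else 0.0
        return self.validation_hours / self.capacity_recovered_hours


# Made-up numbers for a hypothetical increment.
increment = IncrementEffort("billing-service-refactor", 120.0, 45.0)
print(increment.net_recovered_hours)  # 75.0
print(increment.validation_ratio)     # 0.375
```

An increment that reports only the 120 hours overstates its recovery. Reporting the net figure alongside the ratio keeps the validation cost in the same conversation as the benefit.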

Self-funding increments have a governance dimension. Article 2 argued that every modernization step has to pay for itself in capacity, roadmap, or diligence terms. When AI is doing the work, the diligence dimension changes. An increment that ships with AI-generated code and inadequate validation can recover capacity in the short term while creating diligence liability for the long term. The buyer’s technical due diligence team will ask how the code was generated, how it was reviewed, and what the audit trail looks like. Decision auditability means treating the AI-generated provenance of code as part of the increment’s value test, not as a downstream compliance concern.

Drift has a governance dimension. Article 3 was about the failure mode where modernization efforts ship velocity without business outcomes. AI-accelerated modernization compresses the drift detection window. More changes per sprint. More surface area. Faster accumulation of decisions that nobody is fully tracking. Decision auditability means keeping a record of why specific AI suggestions were accepted, rejected, or modified, so that when drift surfaces, the team can reconstruct what happened.
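A record like that can be very small. The following is a minimal sketch of a decision log, assuming a handful of fields (who decided, what the AI suggested, the disposition, the rationale). The class names, field names, and sample entries are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class Disposition(Enum):
    ACCEPTED = "accepted"
    MODIFIED = "modified"
    REJECTED = "rejected"


@dataclass(frozen=True)
class DecisionRecord:
    """One decision about one AI suggestion (hypothetical schema)."""
    decided_on: date
    decided_by: str
    component: str
    ai_suggestion: str
    disposition: Disposition
    rationale: str


def decisions_for(log, component):
    """Return the decisions touching a component, oldest first."""
    matching = [record for record in log if record.component == component]
    return sorted(matching, key=lambda record: record.decided_on)


# Illustrative entries only.
log = [
    DecisionRecord(date(2025, 3, 10), "lead-a", "order-sync",
                   "Replace polling loop with event handler",
                   Disposition.MODIFIED, "Kept retry limit from legacy code"),
    DecisionRecord(date(2025, 2, 3), "eng-b", "order-sync",
                   "Inline the currency conversion helper",
                   Disposition.REJECTED, "Helper encodes a rounding rule"),
    DecisionRecord(date(2025, 3, 1), "eng-c", "reporting",
                   "Generate unit tests for export module",
                   Disposition.ACCEPTED, "Tests reviewed and passing"),
]

for record in decisions_for(log, "order-sync"):
    print(record.decided_on, record.disposition.value, record.rationale)
```

When drift surfaces in a component, the query gives the team a dated sequence of what was suggested, what was done with it, and why.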

The economics shift has a governance dimension. Article 4 argued that AI changes the speed of modernization, not the discipline. The governance equivalent: AI changes the speed at which decisions get made, not the discipline of making them. Companies that adopted AI tooling without updating their decision-making cadence will find that engineering decisions are now happening faster than governance can review them. The result is decision drag, where good engineering judgment is bottlenecked by quarterly review cycles, or governance drift, where decisions ship without review at all. Both are bad. Decision auditability is the only path that doesn’t force a choice between them.

Four Questions for Embedded Governance

If governance has to be embedded in modernization decisions, the practical question is what that looks like day to day. These four questions, asked at the increment level, separate embedded governance from compliance theater.

1. What did the AI actually do, and what did the team validate?

Every increment that uses AI tooling should have a clear record. What was AI-generated. What was human-written. What was AI-generated and then human-modified. What was validated by tests, code review, or production observation. What was accepted on faith because the team trusted the tool.

This isn’t bureaucracy. It’s the decision auditability that lets the team reconstruct what happened when something fails six months later. Without it, post-mortems become guesswork and diligence reviews become exposures.
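The categories above map naturally onto a per-change record. Here is a minimal sketch, assuming each change in an increment is tagged with its origin and the validation it received. The enum values mirror the categories in the text, while the class names and file paths are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Origin(Enum):
    AI_GENERATED = "ai_generated"
    HUMAN_WRITTEN = "human_written"
    AI_THEN_HUMAN_MODIFIED = "ai_then_human_modified"


class Validation(Enum):
    TESTS = "tests"
    CODE_REVIEW = "code_review"
    PRODUCTION_OBSERVATION = "production_observation"


@dataclass(frozen=True)
class Change:
    """One change in an increment (hypothetical schema)."""
    path: str
    origin: Origin
    validated_by: frozenset = frozenset()  # empty means nothing validated it


def accepted_on_faith(changes):
    """AI-involved changes that shipped with no recorded validation."""
    return [
        change for change in changes
        if change.origin is not Origin.HUMAN_WRITTEN and not change.validated_by
    ]


# Illustrative increment; paths are made up.
increment = [
    Change("src/invoice/totals.py", Origin.AI_GENERATED,
           frozenset({Validation.TESTS, Validation.CODE_REVIEW})),
    Change("src/invoice/rounding.py", Origin.AI_THEN_HUMAN_MODIFIED,
           frozenset({Validation.CODE_REVIEW})),
    Change("src/invoice/export.py", Origin.AI_GENERATED),
    Change("src/invoice/cli.py", Origin.HUMAN_WRITTEN),
]

for change in accepted_on_faith(increment):
    print(change.path)  # src/invoice/export.py
```

The point of the query is not to block the increment. It makes the "accepted on faith" set explicit, so the team chooses it rather than discovers it in a post-mortem.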

2. Where could AI suggestions silently degrade the system?

AI tools are good at producing code that looks right. They’re worse at preserving subtle behavior the original code depended on, edge cases that weren’t in the training data, and integration patterns that exist only in tribal knowledge.

Embedded governance means identifying the parts of the system where silent degradation is most costly. Security boundaries. Financial calculations. Regulated workflows. Data integrity guarantees. Those areas need tighter validation discipline. The bolted-on equivalent is a single AI usage policy that treats all code paths equally. That doesn’t reflect how risk actually distributes.
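Risk-weighted validation can be expressed as a small lookup from code path to required checks. The sketch below is one possible shape, assuming three tiers and path-prefix matching. The tier names, directory prefixes, and check names are all hypothetical and would need to reflect a real system's layout.

```python
from enum import IntEnum


class RiskTier(IntEnum):
    STANDARD = 1
    ELEVATED = 2
    CRITICAL = 3


# Hypothetical mapping from path prefixes to tiers.
TIER_BY_PREFIX = {
    "src/auth/": RiskTier.CRITICAL,        # security boundaries
    "src/ledger/": RiskTier.CRITICAL,      # financial calculations
    "src/compliance/": RiskTier.CRITICAL,  # regulated workflows
    "src/storage/": RiskTier.ELEVATED,     # data integrity guarantees
}

# Hypothetical validation requirements per tier.
REQUIRED_CHECKS = {
    RiskTier.STANDARD: {"code_review"},
    RiskTier.ELEVATED: {"code_review", "regression_tests"},
    RiskTier.CRITICAL: {"code_review", "regression_tests", "second_reviewer"},
}


def tier_for(path):
    """Highest tier among matching prefixes, or STANDARD if none match."""
    matches = [tier for prefix, tier in TIER_BY_PREFIX.items()
               if path.startswith(prefix)]
    return max(matches, default=RiskTier.STANDARD)


def missing_checks(path, completed):
    """Checks the path's tier requires that have not been completed."""
    return REQUIRED_CHECKS[tier_for(path)] - set(completed)


print(tier_for("src/ledger/interest.py").name)
print(sorted(missing_checks("src/ledger/interest.py", {"code_review"})))
print(sorted(missing_checks("src/ui/banner.py", {"code_review"})))
```

The same AI-generated change clears review in a UI module but is held in the ledger until the extra checks are done, which is the uneven treatment a single flat policy cannot express.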

3. Are decisions being made faster than they’re being reviewed?

This is the cadence question. If sprint planning happens weekly but the AI usage review happens quarterly, you’re making roughly 13 weekly rounds of AI-assisted decisions for every governance touchpoint. That’s not governance. That’s documentation after the fact.

Embedded governance means decision review happens at the cadence decisions are being made. For most modernization efforts, that means weekly or bi-weekly, not monthly or quarterly. The review can be lightweight, but it has to be timely.
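The cadence gap is simple arithmetic, and it can be worth computing explicitly for a given team. A minimal sketch follows, assuming both cadences are expressed in weeks and a quarter is counted as 13 weeks. The function name is hypothetical.

```python
def decision_cycles_per_review(decision_interval_weeks, review_interval_weeks):
    """How many decision cycles pass between two governance reviews."""
    if decision_interval_weeks <= 0 or review_interval_weeks <= 0:
        raise ValueError("intervals must be positive")
    return review_interval_weeks / decision_interval_weeks


# Weekly sprint planning against a quarterly review (13 weeks).
print(decision_cycles_per_review(1, 13))  # 13.0

# Weekly sprint planning against a bi-weekly review.
print(decision_cycles_per_review(1, 2))   # 2.0
```

A ratio near 1 means review keeps pace with decisions. The further it climbs, the more of the review becomes reconstruction of decisions that have already shipped.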

4. Will the diligence story hold?

Buyers are starting to ask AI-specific questions in technical due diligence. How was AI used in the codebase? What’s the validation discipline? What’s the audit trail? What happens to the platform if the AI tooling vendor changes terms or goes away?

Companies that can answer these questions cleanly will move through diligence faster and command better valuations. Companies that can’t will face deeper diligence, longer hold periods, or discounted exits. Decision auditability is what makes the difference.

Why This Matters Now

AI governance has been treated as a regulatory question. EU AI Act compliance. NIST framework alignment. State-level disclosure requirements. Those matter, but they’re table stakes. They tell you what you have to do to stay legal.

The competitive question is different. PE-backed companies entering diligence over the next 24 to 36 months are going to face buyer-side technical due diligence that includes AI-specific scrutiny. How was AI used in the platform? What was the validation discipline? What’s the dependency on AI tooling vendors? What happens to engineering capacity if the AI productivity assumptions don’t hold?

Companies with embedded governance will answer those questions cleanly because the answers are properties of how the modernization was executed. The audit trail exists because it was always there.

Companies with bolted-on governance will answer those questions with compliance documentation that doesn’t actually map to the engineering decisions that shaped the platform. Buyers will notice. Diligence will deepen. Valuation will adjust.

AI usage is going to become a standard diligence dimension. The companies that figure it out early will benefit. The companies that wait until a buyer asks will be reconstructing two years of decisions under time pressure.

Decision Auditability Is the Discipline

The four-part modernization series laid out a framework for running modernization in any constrained environment. Reliability tax. Self-funding increments. Drift. AI economics. Each one is a discipline.

Decision auditability is what holds them together when AI is in the work. Not a separate workstream. Not a quarterly review. Not a documentation exercise. A property of how decisions get made.

The CTOs who build decision auditability into the modernization process will recover the reliability tax faster, ship self-funding increments more cleanly, detect drift earlier, and build a diligence story that supports valuation. The CTOs who treat AI governance as bolted-on will produce compliance artifacts and hope the audit goes well.

The vendors selling AI governance platforms will pitch you on the bolted-on model. It’s easier to sell a tool than a discipline. But the discipline is what matters, and the discipline has to live inside the modernization decisions, not adjacent to them.

This is the seam. Modernization meets governance at the level of how decisions get made.