A capstone to the Managing the Digital Workforce series — the market, organized by governance layer.

Machine identities outnumber human employees 82 to 1 in the average enterprise. That’s not a forecast. That’s IBM X-Force’s 2026 count of what’s already running inside organizations today.

Most of those machine identities are ungoverned. Gravitee’s 2026 State of AI Agent Security found that only 14.4% of organizations report their agents go live with full security approval. Only 47% of agents running inside corporations are actively monitored or secured. The remaining 53% are just running.

This is the governance problem this series has been about. Nine articles, one premise: AI agents are workers, not features, and the organizations that treat them that way will have a structural advantage over those that don’t. Each article worked through one layer of what management of a digital workforce actually requires: inventory, identity, guardrails, posture, supervision, gateways, audit, and discovery.

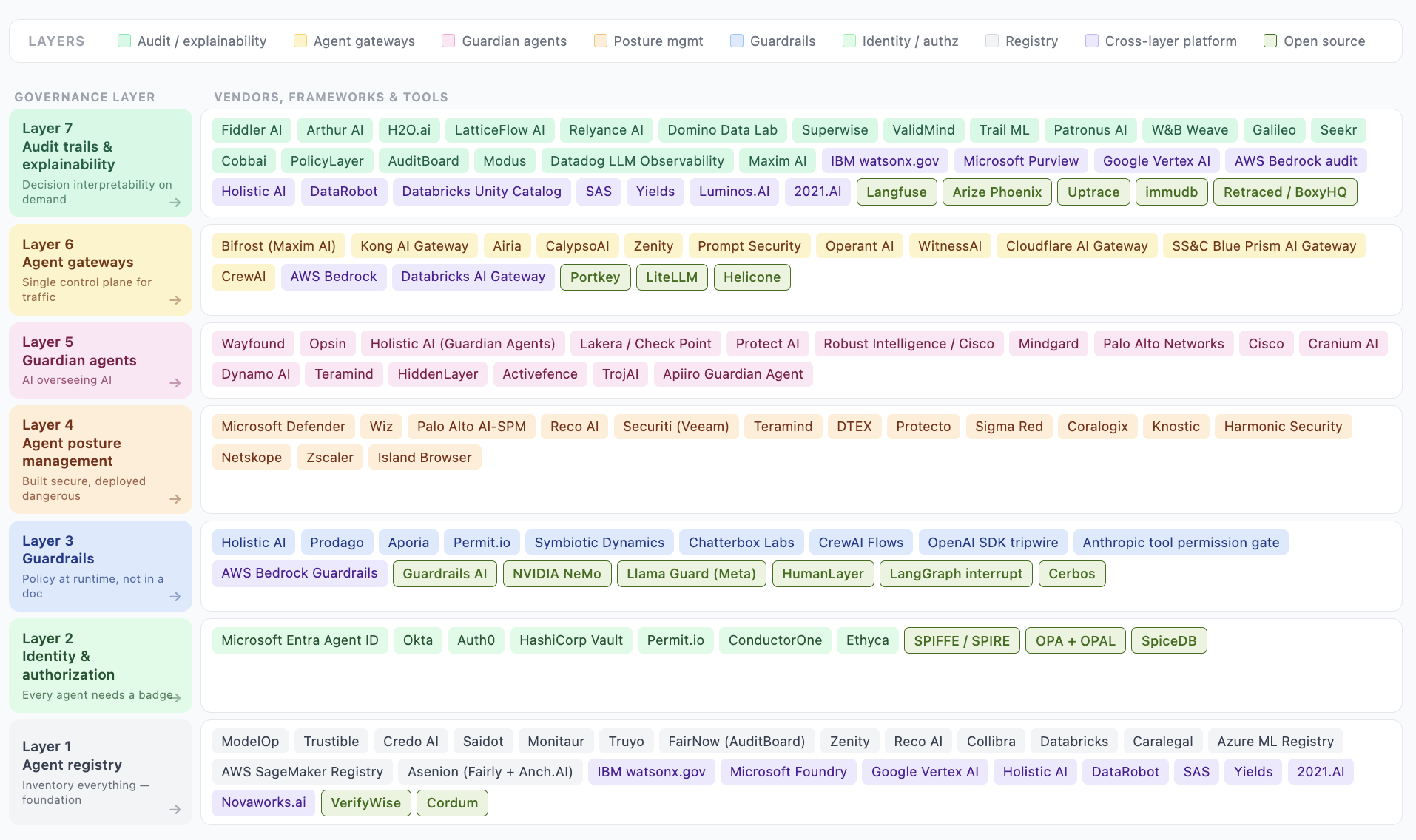

This is the capstone. The series argued how the stack should be built. This essay shows what the market looks like from outside, organized by where in that stack each vendor actually plays.

Why the Existing Taxonomies Miss the Point

Every major analyst taxonomy for this market organizes vendors by what they sell. Gartner’s Market Guide for AI Governance Platforms groups vendors by capability features: AI inventory, risk management, policy enforcement, observability, audit. The IAPP 2026 Vendor Report organizes by service type: policy and compliance, technical assessments, assurance and auditing, consulting and advisory.

Both are useful as procurement guides. Neither is useful as a governance framework.

Here’s the problem they share: they organize the market by product type rather than by accountability layer. A vendor that helps you document your models is in the same taxonomy as a vendor that intercepts your agents’ tool calls at runtime. Those are not the same problem. They don’t overlap in any meaningful way. Putting them in the same category, “AI governance,” creates confusion for buyers who think they’ve addressed governance when they’ve only addressed one layer of it.

The result is what you’d expect: organizations with comprehensive model documentation and no runtime controls. Organizations with strong policy engines and no agent inventories. Organizations that can tell you their model’s bias evaluation score but cannot tell you what their agents accessed in production last Tuesday.

The taxonomy problem is the governance problem. Fix the taxonomy, and the gaps become visible.

Governance Layers, Not Product Categories

The framework underneath this map is the seven-layer governance stack the series has been building toward, plus two structural elements that sit outside the seven layers: the accountability layer above and the discovery prerequisite below.

Discovery (pre-Layer 1). You cannot inventory what you cannot see. Article 9 made the case that shadow AI is not a Layer 1 problem. It’s the prerequisite to Layer 1. Eighty percent of workers use unapproved AI tools. Seventy-three percent of workplace ChatGPT usage happens through personal accounts. The registry only governs the agents you know about. Discovery is the foundation the foundation rests on.

Layer 1 — Agent Registry. The system of record for the digital workforce: every agent, sanctioned and shadow, with owner, scope, data access, risk tier, and deployment status. The HRIS for digital workers.
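A minimal sketch of what one registry record might look like, using the fields named above. The class names, enum values, and example agents are hypothetical illustrations, not taken from any vendor's schema.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


class Status(Enum):
    SANCTIONED = "sanctioned"
    SHADOW = "shadow"    # found by discovery, never formally approved
    RETIRED = "retired"


@dataclass
class AgentRecord:
    agent_id: str
    owner: str               # accountable human or team
    scope: str               # what the agent is meant to do
    data_access: list[str]   # systems or datasets it can reach
    risk_tier: RiskTier
    status: Status


class AgentRegistry:
    """In-memory system of record; a real one would be a durable store."""

    def __init__(self) -> None:
        self._records: dict[str, AgentRecord] = {}

    def register(self, record: AgentRecord) -> None:
        self._records[record.agent_id] = record

    def by_status(self, status: Status) -> list[AgentRecord]:
        return [r for r in self._records.values() if r.status is status]


registry = AgentRegistry()
registry.register(AgentRecord("invoice-bot", "finance-ops", "match invoices to POs",
                              ["erp.invoices"], RiskTier.MEDIUM, Status.SANCTIONED))
registry.register(AgentRecord("sales-notes-gpt", "unknown", "summarize call notes",
                              ["crm.contacts"], RiskTier.HIGH, Status.SHADOW))
shadow_ids = [r.agent_id for r in registry.by_status(Status.SHADOW)]
```

Keeping shadow agents in the same table as sanctioned ones, with a status flag, is one way to make the registry a record of what exists rather than only what was approved.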

Layer 2 — Identity and Authorization. Every agent needs a badge. Not a shared API key. Not a service account inherited from the developer who built it. A distinct identity with scoped access, time-bound credentials, and a revocation path. The five-protocol stack (PKCE for credential provisioning, DPoP for token binding, OAuth OBO for delegation, Token Exchange for trust boundary crossing, CAEP for real-time revocation) is the technical answer to the governance question: who is this agent, and who authorized it to act?
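The three properties named above (scoped access, time-bound credentials, a revocation path) can be sketched in a few lines. This is an illustration under stated assumptions only: it does not implement PKCE, DPoP, OBO, Token Exchange, or CAEP, and `CredentialIssuer` and its methods are hypothetical names.

```python
import secrets
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentCredential:
    token: str
    agent_id: str
    scopes: frozenset[str]
    expires_at: float  # epoch seconds


class CredentialIssuer:
    """Hypothetical issuer: one credential per agent, never a shared key."""

    def __init__(self) -> None:
        self._revoked: set[str] = set()

    def issue(self, agent_id: str, scopes: set[str],
              ttl_seconds: float, now: float) -> AgentCredential:
        return AgentCredential(secrets.token_urlsafe(32), agent_id,
                               frozenset(scopes), now + ttl_seconds)

    def revoke(self, cred: AgentCredential) -> None:
        self._revoked.add(cred.token)

    def authorize(self, cred: AgentCredential, scope: str, now: float) -> bool:
        if cred.token in self._revoked:   # revocation path
            return False
        if now >= cred.expires_at:        # time-bound
            return False
        return scope in cred.scopes       # scoped access


issuer = CredentialIssuer()
cred = issuer.issue("invoice-bot", {"erp.invoices:read"}, ttl_seconds=900, now=1000.0)
read_ok = issuer.authorize(cred, "erp.invoices:read", now=1100.0)
write_ok = issuer.authorize(cred, "erp.invoices:write", now=1100.0)
expired_ok = issuer.authorize(cred, "erp.invoices:read", now=2000.0)
issuer.revoke(cred)
revoked_ok = issuer.authorize(cred, "erp.invoices:read", now=1100.0)
```

Passing `now` explicitly keeps the expiry check easy to test; a production system would rely on signed tokens and a shared revocation signal rather than an in-process set.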

Layer 3 — Guardrails. Policy at runtime, not in a document. The practical framework for deciding which agent actions run autonomously and which require human approval before anything happens. Risk-tiered, not binary. The Cynefin framework maps cleanly here: simple tasks get full automation, complicated tasks get agentic AI with good guardrails, complex tasks need human judgment in the loop, chaotic situations get human control with AI as assistant.
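The Cynefin mapping described above reduces to a small lookup table. The sketch below encodes it directly; the enum and function names are hypothetical, and how a task gets classified into a domain is assumed to happen elsewhere.

```python
from enum import Enum


class Domain(Enum):
    SIMPLE = "simple"
    COMPLICATED = "complicated"
    COMPLEX = "complex"
    CHAOTIC = "chaotic"


class Oversight(Enum):
    FULL_AUTOMATION = "full automation"
    AGENT_WITH_GUARDRAILS = "agentic AI with guardrails"
    HUMAN_IN_THE_LOOP = "human judgment in the loop"
    HUMAN_CONTROL = "human control, AI as assistant"


# Risk-tiered, not binary: each domain gets its own oversight mode.
OVERSIGHT_BY_DOMAIN = {
    Domain.SIMPLE: Oversight.FULL_AUTOMATION,
    Domain.COMPLICATED: Oversight.AGENT_WITH_GUARDRAILS,
    Domain.COMPLEX: Oversight.HUMAN_IN_THE_LOOP,
    Domain.CHAOTIC: Oversight.HUMAN_CONTROL,
}


def requires_human_approval(domain: Domain) -> bool:
    """True when a human must act before the agent's action takes effect."""
    return OVERSIGHT_BY_DOMAIN[domain] in {
        Oversight.HUMAN_IN_THE_LOOP,
        Oversight.HUMAN_CONTROL,
    }
```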

Layer 4 — Agent Posture Management. The “built secure, deployed dangerous” problem. An agent that passes all pre-deployment checks can drift into dangerous territory through misconfiguration, scope creep, or gradual behavioral change. Posture management is continuous, not point-in-time. It’s the difference between a security audit and a security program.
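One way to picture continuous posture checking is a diff between the configuration that was approved and the configuration observed in production. The sketch below covers only the scope-creep case; `Posture` and `detect_drift` are hypothetical names, and behavioral drift would need different signals.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Posture:
    tools: frozenset[str]
    data_scopes: frozenset[str]


def detect_drift(approved: Posture, observed: Posture) -> dict[str, set[str]]:
    """Return whatever the running agent has that the approved baseline lacks."""
    return {
        "unapproved_tools": set(observed.tools - approved.tools),
        "unapproved_data_scopes": set(observed.data_scopes - approved.data_scopes),
    }


approved = Posture(frozenset({"lookup_invoice"}), frozenset({"erp.invoices:read"}))
observed = Posture(frozenset({"lookup_invoice", "send_email"}),
                   frozenset({"erp.invoices:read", "crm.contacts:read"}))
drift = detect_drift(approved, observed)
```

Run on a schedule rather than once at deployment, a check like this is the difference between a point-in-time audit and a program.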

Layer 5 — Guardian Agents. As the agent population scales, humans can’t monitor every transaction. The answer is AI oversight of AI. Uncomfortable, but probably inevitable. Guardian agents watch for behavioral drift, anomalous access patterns, and policy violations across the fleet. On February 25, 2026, Gartner published its inaugural Market Guide for Guardian Agents. That’s formal recognition the layer has graduated from a theoretical construct to a named, funded, analyst-tracked market.

Layer 6 — Agent Gateways. The single control plane for agent traffic. A centralized chokepoint where agent-to-tool calls, agent-to-agent communications, and MCP server connections are inspected, enforced, and logged. The gateway market has split into two camps: infrastructure-first vendors focused on routing, failover, and cost optimization, and security-first vendors focused on posture management, runtime threat detection, and policy enforcement. In practice, organizations layer both.
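The chokepoint idea (inspect, enforce, log) can be sketched as a single function every tool call passes through. This is a toy in-process version with a hypothetical `Gateway` class and allowlist; real gateways sit on the network path and handle MCP and agent-to-agent traffic as well.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class Gateway:
    allowed: dict[str, set[str]]                   # agent_id -> tools it may call
    log: list[dict] = field(default_factory=list)  # every attempt, allowed or not

    def call(self, agent_id: str, tool: str,
             handler: Callable[..., Any], **kwargs: Any) -> Any:
        permitted = tool in self.allowed.get(agent_id, set())  # inspect
        self.log.append({"agent": agent_id, "tool": tool,
                         "args": kwargs, "allowed": permitted})  # log
        if not permitted:                                        # enforce
            raise PermissionError(f"{agent_id} may not call {tool}")
        return handler(**kwargs)


def lookup_invoice(invoice_id: str) -> dict:
    return {"invoice_id": invoice_id, "status": "paid"}


gw = Gateway(allowed={"invoice-bot": {"lookup_invoice"}})
result = gw.call("invoice-bot", "lookup_invoice", lookup_invoice, invoice_id="INV-1")

try:
    gw.call("sales-notes-gpt", "lookup_invoice", lookup_invoice, invoice_id="INV-1")
    denied = False
except PermissionError:
    denied = True
```

Logging before enforcing means denied attempts are recorded too, which is usually the more interesting half of the record.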

Layer 7 — Audit Trails and Explainability. Decision interpretability on demand. Not just logs. A reconstructable chain of reasoning: what the agent did, what data it accessed, what policy governed its action, who approved it, and why. The EU AI Act makes this non-negotiable for high-risk systems. The enforcement deadline is August 2026.
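To illustrate the difference between a log and a reconstructable record, here is a minimal hash-chained audit trail: each entry commits to the one before it, so editing history after the fact is detectable. The event fields mirror the list above; the function names and field values are hypothetical, and hash chaining is one common tamper-evidence technique, not something the EU AI Act prescribes.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(event: dict, prev_hash: str) -> str:
    body = json.dumps({"event": event, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_entry(chain: list[dict], event: dict) -> dict:
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    entry = {"event": event, "prev": prev_hash, "hash": _digest(event, prev_hash)}
    chain.append(entry)
    return entry


def verify(chain: list[dict]) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(entry["event"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


chain: list[dict] = []
append_entry(chain, {"agent": "invoice-bot", "action": "lookup_invoice",
                     "data": ["erp.invoices"], "policy": "finance-read-only",
                     "approved_by": None, "reason": "low risk, autonomous"})
append_entry(chain, {"agent": "invoice-bot", "action": "issue_refund",
                     "data": ["erp.payments"], "policy": "refunds-need-approval",
                     "approved_by": "j.doe", "reason": "duplicate charge confirmed"})
intact = verify(chain)

chain[0]["event"]["action"] = "something_else"  # simulate after-the-fact tampering
tampered_detected = not verify(chain)
```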

What “Agent Governance” Actually Governs

LangChain’s March 2026 analysis of agent harnesses offers a clarifying frame: Agent = Model + Harness. Everything that isn’t the model is the harness: system prompts, tools, MCP connections, orchestration logic, filesystems, sandboxes, middleware.

That distinction matters enormously for the governance taxonomy.

Most vendors in the “AI governance” market govern models. They manage model documentation, evaluate bias in model outputs, validate models against safety criteria, and generate audit artifacts about model behavior. That work is necessary. It is not sufficient.

Agents operate at the harness level. The enterprise risk lives in the harness. The tools the agent can call. The MCP servers it connects to. The sandboxes it runs in. The memory it persists across sessions. The orchestration logic that decides when to spawn sub-agents. Data exposure, unauthorized actions, accountability failures all happen here.

Model governance governs what you built into the weights. Agent governance governs everything in the harness.

The seven-layer governance stack is a harness governance framework. That’s what makes it categorically different from what the analyst reports currently describe. And that’s why most “comprehensive” AI governance platforms still leave organizations exposed: they govern the model and miss the harness.

There’s a further wrinkle worth naming. Research into harness architecture (Daily Dose of DS, April 2026) observes that well-designed harnesses get simpler as models improve. Useful primitives migrate from harness configuration into model behavior through post-training. Controls that were explicit, auditable policies in the harness become implicit model behavior. The audit trail disappears. The accountability chain breaks. Organizations that instrument their harnesses for governance now are capturing a record that will be progressively harder to reconstruct as the line between model and harness blurs. The window for legible harness-level governance is not permanent.

This is also why the governance stack must be additive and observable rather than structurally coupled to any particular harness design. Governance instrumentation that requires rebuilding the harness around it will be left behind as harnesses simplify. The governance layer must observe without requiring the observed system to be rebuilt.

The Market, Mapped by Layer

The map organizes the vendor landscape across the seven governance layers, the accountability layer above, the discovery prerequisite below, and the missing layer in the middle.

View the full AI Governance Market Map →

What the map shows

Layers 1 and 3 are the most mature. The agent registry space has the most commercial activity. ModelOp, Trustible, Credo AI, Saidot, Monitaur, Truyo, and the major platform registries from IBM, Microsoft, Google, and AWS are all competing here, with a significant open-source ecosystem (VerifyWise, Cordum) forming underneath. The guardrails layer is similarly competitive, with both commercial products (Holistic AI, Prodago, CalypsoAI) and substantial open-source tooling (Guardrails AI, NVIDIA NeMo, Llama Guard, LangGraph interrupt).

Layer 2 is occupied by IAM incumbents. Microsoft Entra Agent ID, Okta, Auth0, and infrastructure tools (HashiCorp Vault, SPIFFE/SPIRE) that were designed for conventional workload identity, not for autonomous agents. They work, but with significant gaps around OBO delegation, PKCE for ephemeral agents, and CAEP for real-time revocation. No purpose-built agent identity vendor has emerged. This is the gap the IAM incumbents are racing to fill before someone purpose-builds it.

Layer 4 has split into two distinct posture problems. The original Layer 4 thesis (“built secure, deployed dangerous” for sanctioned agents) is still served by cloud security vendors (Wiz, Palo Alto AI-SPM, Microsoft Defender) extending into AI from traditional CSPM, plus behavioral analytics vendors (Teramind, DTEX) extending from the human-behavior-monitoring space. But Article 9 surfaced a second posture problem the analyst reports treat as separate: shadow AI discovery. Finding the agents that never got registered in the first place. A new vendor cluster has formed here. Knostic is the broadest pure-play, covering chatbot shadow AI, IDE extensions, MCP servers, and the agent supply chain. Harmonic Security uses small language models for content-aware analysis to distinguish harmless queries from risky uploads. Netskope and Zscaler are SASE/SSE incumbents extending their proxy infrastructure to AI traffic. Island Browser uses the enterprise browser as a discovery surface. AI-SPM governs the agents you registered. Shadow AI discovery finds the ones you didn’t.

Layer 5 went from zero to early-stage in a few months. The February 25, 2026 Gartner Market Guide for Guardian Agents marked the category’s formal birth. Wayfound is the clearest pure-play, purpose-built for business-user-accessible AI agent supervision and named by Gartner in the Business Alignment and Outcome Optimizers segment. Opsin focuses on the enterprise security angle: how agents interact with sensitive data. Holistic AI launched an explicit Guardian Agents product in 2026, positioning their Sentinel Agents (continuous observation) and Operative Agents (real-time intervention) as a dedicated Layer 5 capability. The security-oriented vendors (Lakera/Check Point, Protect AI, Robust Intelligence/Cisco, Mindgard, Palo Alto Networks) approach the same layer from an AI TRiSM angle. Gartner’s prediction is pointed: by 2029, independent guardian agents will eliminate the need for almost half of incumbent security systems protecting AI agent activities. That’s also a warning. The guardian agent vendors that emerge now will displace the security tools currently approximating the function. One gap worth naming: as organizations deploy guardian agents, they will need metagovernance. Governance of the guardians themselves. Gartner flags it as an emerging critical requirement that no one is addressing yet.

Layer 6 has more depth than the analyst reports reflect. The gateway market has bifurcated into infrastructure and security camps, and both are now well-populated. On the infrastructure side, Bifrost (Maxim AI) is the architectural reference: an open-source, Go-based MCP gateway and LLM router in a single control plane, fast enough that overhead is measured in microseconds at production scale across 20+ providers. Kong AI Gateway extends existing API management infrastructure to AI traffic. The natural choice for organizations already running Kong for REST APIs. Cloudflare AI Gateway adds managed edge deployment and unified billing across AI providers. Portkey and LiteLLM cover the observability-first and maximum-provider-coverage alternatives. On the security side, Zenity is the most capable, spanning agent discovery, AI Security Posture Management, and AI Detection and Response across SaaS, cloud, and endpoint environments. Its March 2026 Build Partnership with ServiceNow is a significant signal. Zenity’s agent security capabilities are now native to ServiceNow SecOps, feeding directly into AI Control Tower and security incident response workflows. That’s the datacenter parallel made concrete. The same platform enterprises used to solve ITSM and CMDB is now extending the same governance pattern to AI agents. MCP support has become table stakes. What remains genuinely unserved is Gartner’s “AI usage control (AI-UC)” category: a formal representative vendor for the full governance chokepoint, not just routing or security in isolation.

Layer 7 has begun to form, but the practitioner reality lags far behind. The category that didn’t exist a year ago now has named vendors. Seekr provides training data attribution and influence scoring, quantifying which training data shaped which output. Cobbai and PolicyLayer focus on agentic audit trails specifically: decision provenance, RBAC for agent actions, the kind of reconstructable record regulators ask for. Modus raised $85 million in April 2026 to expand AI-powered audit platform partnerships with accounting and audit firms. The largest funding signal the category has seen. AuditBoard’s acquisition of FairNow in October 2025 places legacy GRC firmly into the audit trail layer. The observability tooling (Langfuse, Arize Phoenix, W&B Weave, Datadog LLM Observability, Maxim AI) captures the technical trace. None of it solves the practitioner’s actual problem. Grant Thornton’s 2026 finding: 78% of senior leaders lack full confidence they could pass an independent AI governance audit within 90 days. The vendors are arriving. The audit infrastructure inside enterprises hasn’t caught up. The August 2026 EU AI Act enforcement deadline is the forcing function that closes that gap, willingly or otherwise.

Consolidation is accelerating. FairNow AI was acquired by AuditBoard in October 2025 (GRC absorbing AI governance platforms, exactly as Gartner predicted). Securiti AI was acquired by Veeam for $1.7B the same month (data security absorbing AI governance). Modus raised $85M in April 2026, the largest single funding round the audit trail layer has seen. The Zenity/ServiceNow Build Partnership at RSA 2026 puts agent security capabilities natively inside an incumbent enterprise platform. Across the four signals, the dominant pattern is consistent: capital is concentrating, and adjacent markets are absorbing the pure-play governance vendors. Buyers should expect the field of independent options to narrow over the next 18 months, and capability differentiation to flatten as integration becomes the value proposition.

The cross-layer platform question

IBM watsonx.governance, Microsoft (Azure AI Foundry + Purview + Entra + Defender), Google Vertex AI, AWS, DataRobot, Databricks, SAS, and Holistic AI all claim multi-layer coverage. The IDC MarketScape (December 2025) positions Microsoft and Databricks as Leaders, with IBM, AWS, Google, DataRobot, Dataiku, and Holistic AI as Major Players.

Gartner’s most important observation about these platforms: claims that AI governance can be fully supported in one solution are frequently exaggerated. Most only cover parts of the AI lifecycle and lack the full-spectrum governance, risk management, and policy enforcement required for seamless execution.

That holds up against the layer taxonomy. The cross-layer platforms are strong on Layers 1 (registry), 3 (guardrails within their ecosystem), and 7 (model-level explainability). They’re consistently weak on Layers 5 and 6, guardian agents and gateways, because those layers have to span a heterogeneous, multi-vendor agent fleet by nature. A guardian agent or gateway that only covers one vendor’s agents isn’t a guardian or gateway. It’s a feature.

The Critical Whitespace

There’s one capability every vendor on the map is missing. Every one. Task queue and human review routing for agent actions in production.

This is not a gap in a single vendor’s product. It is a gap in the market.

The capability: a platform-agnostic system that routes risky agent actions to human reviewers with full context (reasoning traces, data accessed, risk scores, applicable policies), captures approve/reject/override decisions with rationale, maintains an immutable record of human decisions on agent actions, and works across a heterogeneous agent fleet rather than inside a single vendor’s ecosystem.
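A rough sketch of the core data flow, to make the capability concrete. Everything here is hypothetical (`ReviewItem`, `ReviewQueue`, the threshold value), and the sketch omits the hard parts: cross-platform ingestion, a reviewer interface, and a genuinely immutable store.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Decision(Enum):
    APPROVE = "approve"
    REJECT = "reject"
    OVERRIDE = "override"


@dataclass
class ReviewItem:
    agent_id: str
    action: str
    reasoning_trace: str
    data_accessed: list[str]
    risk_score: float            # 0.0 (benign) to 1.0 (severe)
    policies: list[str]
    decision: Optional[Decision] = None
    reviewer: Optional[str] = None
    rationale: Optional[str] = None


@dataclass
class ReviewQueue:
    risk_threshold: float
    pending: list[ReviewItem] = field(default_factory=list)
    decided: list[ReviewItem] = field(default_factory=list)

    def submit(self, item: ReviewItem) -> bool:
        """Return True if the action may run now, False if held for review."""
        if item.risk_score < self.risk_threshold:
            return True
        self.pending.append(item)
        return False

    def decide(self, item: ReviewItem, decision: Decision,
               reviewer: str, rationale: str) -> None:
        if not rationale.strip():
            raise ValueError("a decision must carry a rationale")
        item.decision, item.reviewer, item.rationale = decision, reviewer, rationale
        self.pending.remove(item)
        self.decided.append(item)


queue = ReviewQueue(risk_threshold=0.7)
refund = ReviewItem("invoice-bot", "issue_refund", "customer charged twice",
                    ["erp.payments"], 0.9, ["refunds-need-approval"])
ran_immediately = queue.submit(refund)
queue.decide(refund, Decision.APPROVE, "j.doe", "duplicate charge confirmed")
```

The point of the shape is that the human decision, with reviewer and rationale, becomes a first-class record attached to a specific agent action rather than to a governance artifact.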

Call it the HITL control plane. The “ServiceNow for AI agents.” The operational layer between autonomous execution and human accountability.

It doesn’t exist as a commercial product.

The open-source ecosystem is assembling pieces. Temporal provides durable execution for long-running workflows with human signal patterns. HumanLayer provides an SDK for integrating human approval decisions into agent code. LangGraph’s interrupt/resume pattern gives agents a native pause point for human input. Windmill and Activepieces both have native approval workflow nodes. Cordum, open-sourced in January 2026, is a purpose-built AI agent governance control plane with a policy-before-dispatch model. None of them is the integrated platform with the registry, routing, review interface, and audit trail that operational human oversight of agents actually requires.

Credo AI is the closest commercial approximation. They have genuine HITL escalation workflows and Gartner calls them out explicitly for this. But they’re model-governance-first. Their HITL applies primarily to governance artifacts (use case approvals, model release sign-offs) rather than to agent actions in production (route this specific output from this specific agent to a human reviewer right now). That distinction is everything.

Holistic AI has moved closer with their Guardian Agents product. Sentinel and Operative agents together get partway there. But the governance is automated enforcement, not human review routing. Blocking a bad action and routing it to a human reviewer for an accountable decision are different things.

UiPath’s Action Center has the queue, the state machine, and the human approval primitives. It’s the right pattern, mature and production-tested. But it was built for RPA, not agentic AI. It doesn’t model reasoning traces, doesn’t integrate with modern LLM observability, and doesn’t govern a heterogeneous agent fleet across vendors.

ServiceNow AI Control Tower has both the queue concept and the approval workflow. The Zenity Build Partnership now feeds agent security signals directly into it. But the platform is ecosystem-locked. It governs the agents it can see beautifully. It doesn’t govern your Anthropic custom agent, your internal Python agent, and your vendor’s embedded AI simultaneously under one accountability record.

The gap is the combination: vendor-agnostic, operational, work-item-level human oversight of agent actions with full context, immutable audit trail, and cross-platform coverage. Each piece exists somewhere. The integrated whole does not.

One forcing function clarified itself recently. Anthropic changed its policy in April 2026: Claude subscriptions no longer cover usage inside third-party harnesses. Developers building on Claude must route through the API. The practical effect is that any governance platform built on a single-provider foundation just had its commercial model complicated by a provider’s policy decision. The governance layer that governs the digital workforce cannot be built on any one model provider’s terms. It has to be genuinely neutral. Switzerland in a provider war that is very much still being fought.

The Regulatory Forcing Function

The market will accelerate whether or not the product ecosystem catches up.

The EU AI Act’s high-risk provisions take full effect in August 2026. Penalties under the Act reach as high as 35 million euros or 7% of global annual turnover for the most serious violations. High-risk AI systems require mandatory human oversight mechanisms, transparency documentation, and audit trail capabilities that map directly to Layers 3, 5, 6, and 7 of the governance stack.

NIST launched its AI Agent Standards Initiative in February 2026. The comment periods closed in April. The initiative addresses identity, authorization, security controls, monitoring, logging, and incident response for AI agents. A direct mapping to Layers 1 through 4.

The NSA issued AI/ML Supply Chain Risks and Mitigations guidance in March 2026, recommending AI Bills of Materials (AIBOMs) as enterprise practice. The OWASP AIBOM project and the Linux Foundation’s AI-BOM with SPDX 3.0 are the emerging standards. Model provenance, dataset lineage, dependency mapping. Layer 1 requirements that will become procurement table stakes.

At the infrastructure level, standards are converging faster than vendors. MCP was donated to the Linux Foundation in December 2025, having scaled in 16 months to 97 million monthly SDK downloads and support from all major AI providers. OpenTelemetry GenAI Semantic Conventions v1.37 is moving from experimental to stable, with Datadog’s native adoption as the tipping point signal. The Kubernetes AI Gateway Working Group, announced in March 2026, is building standards for network-layer AI governance. When the CNCF, Linux Foundation, and Kubernetes ecosystem all organize working groups around the same problem, the problem has become consensus infrastructure, not an emerging niche.

The window between now and August 2026 is the best possible moment for governance investment. Organizations that act now build infrastructure before compliance becomes mandatory enforcement. Organizations that wait build it under pressure, at higher cost, with less time to learn what works.

What the Map Tells You

Five things worth taking from a market map of a stack the analyst community hasn’t quite drawn yet.

The governance stack is not a single purchase. No vendor covers all seven layers adequately. The organizations that are genuinely ahead are buying best-of-breed across the stack and integrating, not buying one “comprehensive” platform and trusting the brochure. The cross-layer platforms earn their place in the architecture for the layers they cover well (typically 1, 3, and parts of 7). The gaps need to be filled deliberately. The gateway architecture specifically requires composition: infrastructure routing layered with security governance layered with observability. Not a single tool. A designed control plane.

Discovery is the foundation that the foundation rests on. The Layer 1 registry only governs the agents you’ve inventoried. Unsanctioned usage (the 80% of workers on unapproved AI tools, much of it running through personal accounts) is invisible to the registry. Article 9’s harshest implication: every layer above (the registry, the badges, the guardrails, the gateways) produces a false sense of completeness if discovery isn’t running underneath. The practitioner sequence isn’t Layer 1 first. It’s discovery first, then Layer 1.

The urgency gradient runs bottom-to-top, but the risk gradient runs top-to-bottom. Discovery and Layer 1 are where you start because nothing above them works without them. But the organizational risk concentrates in Layers 5, 6, and 7. The layers with the fewest vendors, the least mature tooling, and the largest gaps between what organizations need and what the market provides. Strategic priority is to build the foundation while actively planning for the upper layers. Don’t treat foundation completion as the finish line.

The practitioner-vendor gap is the actual story. The vendors are arriving. The audit trail layer that didn’t exist a year ago has named vendors today. The guardian agent layer now has its own Gartner Market Guide. The gateway market has split into camps, and depth has accumulated in both. None of that has translated into operational reality at the average enterprise. Grant Thornton’s number is the one that matters: 78% of senior leaders lack full confidence they could pass an independent AI governance audit within 90 days. The market has more answers than enterprises have implemented. The August 2026 deadline is what closes the gap, willingly or under enforcement.

The missing layer is also the opportunity. The HITL control plane (the vendor-agnostic operational layer for human oversight of agent actions) is the most significant commercial whitespace in the market. It will be filled. The question is whether it gets filled by a purpose-built platform that centers human accountability as the product value, or by an adjacent player extending an existing product surface. Credo AI, ServiceNow, UiPath, and the major cloud vendors are all candidates. The architectural choices being made right now (how agent telemetry is structured, which standards get adopted, how the HITL workflow gets wired into the agent execution pipeline) will determine how that layer develops. The organizations building this infrastructure now are setting the terms.

Where This Series Lands

Nine articles built one argument: AI agents are workers, and the organizations that manage them like workers will outperform the ones that don’t.

The market map is what that argument looks like from outside. A landscape that’s more legible than the analyst reports suggest. Mature layers (registry, guardrails). Formerly empty layers that have filled in over a few months (guardian agents, audit trails). Incumbents holding ground from adjacent markets (identity, posture, gateways). And one structural gap that the entire ecosystem agrees exists but no one has filled (the HITL control plane).

A few things worth saying out loud as the series closes.

The category nomenclature is going to keep shifting. The analyst community calls this “AI governance.” Deloitte’s 2026 Tech Trends calls it the “silicon-based workforce.” McKinsey describes “the agentic organization.” Forrester predicts the top HCM platforms will offer “digital employee management” by 2026. HBR published “To Scale AI Agents Successfully, Think of Them Like Team Members” in March 2026. Whatever it ends up being called, the framing the series has been arguing for (manage them like workers, not features) is the one that’s converging.

The infrastructure is ready before most enterprises are. The standards are coalescing. The vendors are arriving. The regulatory deadlines are scheduled. The component pieces of a real governance stack exist. What’s missing in most organizations isn’t tooling. It’s the operating model that turns the tooling into outcomes. That’s the next problem after this series. It’s where attention should turn.

The window for foundational decisions is short. Organizations that build their governance infrastructure before August 2026 build it on their own terms, with time to learn what works. Organizations that wait will build it under audit pressure, in someone else’s framework, after the budgets have hardened. The cost of doing this right is highest when you’re starting late.

If a CTO walks away from this series with one practical thing, it should be this: the seven layers are not a checklist. They’re a sequence. Discovery first. Then registry. Then identity. Then guardrails. Then posture. Then supervision. Then gateways. Then audit. Each layer is a prerequisite for the one above. Skipping a layer doesn’t mean the layer isn’t there. It means you have an undocumented dependency on something you haven’t built. Most organizations are skipping layers right now without knowing it.
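The "undocumented dependency" point can be made mechanical. The sketch below takes the sequence exactly as listed above and, given the layers an organization has actually built, reports the ones it skipped on the way to its highest layer. The function name and the example inputs are hypothetical.

```python
SEQUENCE = ["discovery", "registry", "identity", "guardrails",
            "posture", "supervision", "gateways", "audit"]


def undocumented_dependencies(built: set[str]) -> list[str]:
    """Layers not built that sit below the highest layer that is built."""
    built_positions = [i for i, layer in enumerate(SEQUENCE) if layer in built]
    if not built_positions:
        return []
    top = max(built_positions)
    return [layer for layer in SEQUENCE[:top] if layer not in built]


skipped = undocumented_dependencies({"registry", "guardrails", "gateways"})
# skipped == ["discovery", "identity", "posture", "supervision"]
```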

The map shows where the market currently sits. What the market becomes is up to the people building the next layer of it. The buyers who insist on real governance instead of governance theater. The builders who fill the gaps the analyst reports haven’t named yet. The operators who treat their digital workforce as a workforce.

That’s the work. And as of the close of this series, it’s everyone’s, not anyone’s in particular.
